In the past, we have talked about how commonly used measures are not valuable and how we benchmark our tool with non-gameable measures. However, we have never really connected analytics back to value: why should we care about analytics? Why should our customers? What could go wrong?

Why Analytics?

At Codeium, we love analytics. Not just because we are very analytical people who love numbers and charts, but because good analytics can enable a number of very positive outcomes for our product and business.

The obvious one is to track progress and make sure that the product is actually getting better. This is not just useful for us as product developers, but also for our customers, who are hoping that we will continue to ship improvements to the AI that end up driving more business value and outcomes.

That being said, analytics are helpful even past tracking raw numbers.

For one, the ability to slice and filter analytics leads to insights that can turn into actionable plans to increase adoption. Notice that one team is getting a lot more value than others from the tool? If all of the teams have received the same training, perhaps this team’s stack (IDE, language, frameworks, etc.) is more amenable to our AI assistance, and the company should roll out the tool to teams with similar stacks. Notice that a few developers haven’t used particular features? If similar developers are getting value from those features, perhaps these developers need some enablement on them, whether that means pointing to our docs or holding a live workshop with Codeium experts.

Another important value for analytics is to be able to justify ROI. Especially now, when there are so many questions on what value AI is actually driving, we need to arm our customers during pilot evaluations to be able to quantify the impact as easily and accurately as possible. If we are to ask our customers to pay us money for the tool, it is our responsibility as vendors to clearly articulate the value that our customer should expect to see in return, and there is no better way to do that than to show real analytics from their own developers during a pilot period.

Codeium’s Analytics

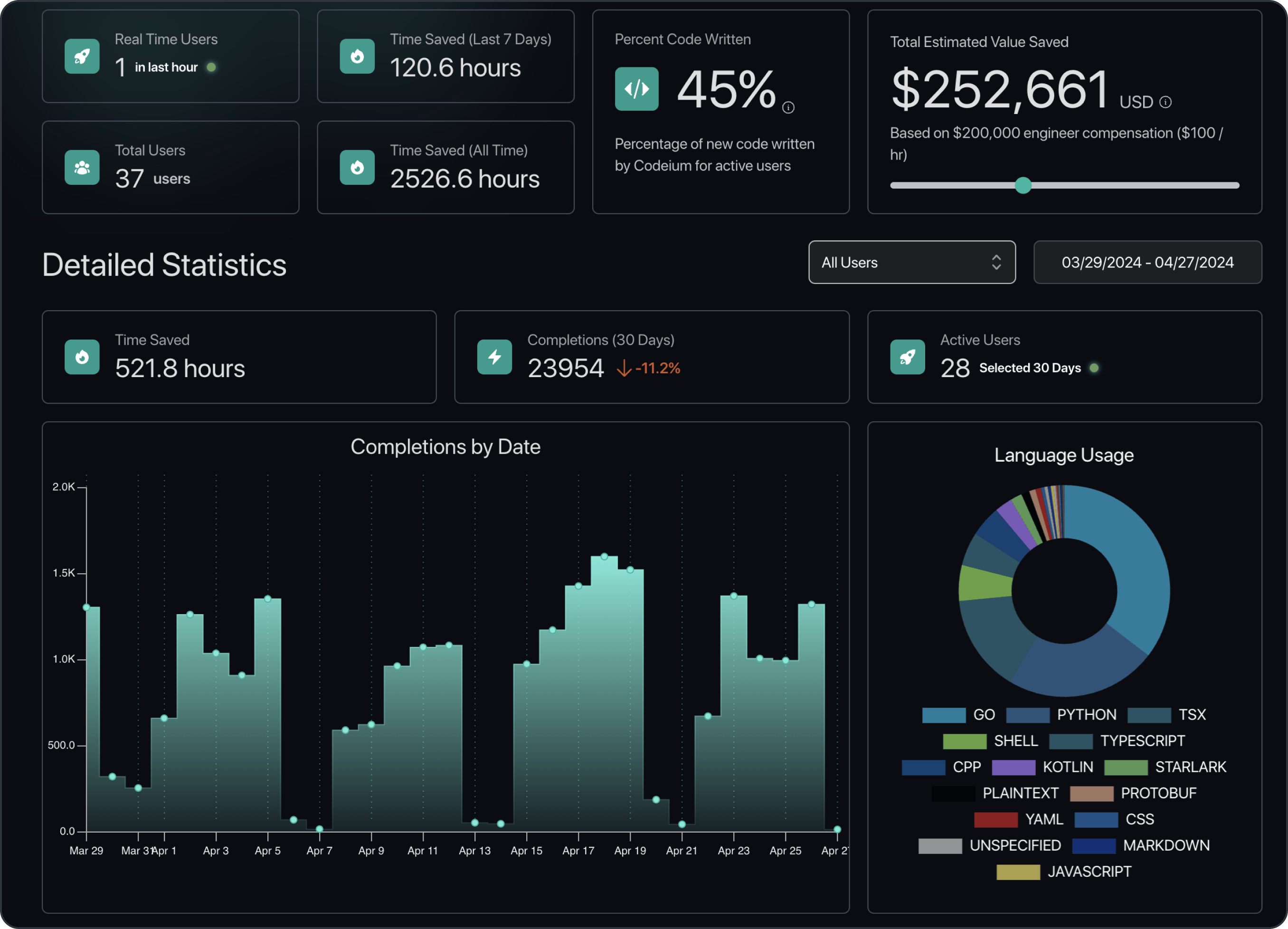

So what analytics does Codeium provide? Well, here’s a quick screenshot of part of our analytics dashboard:

Percentage of Code Written

Our golden metric for our customers is the percentage of new code that is generated by Codeium, accepted, and retained in the codebase without modification. So, if someone accepts a suggestion that is 5 lines long and deletes/modifies 3 of them before committing, we only give Codeium credit for the 2 lines that were unmodified. On our individual user base of 700K+ developers, we see 44.6% of newly committed code coming unmodified from Codeium.
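To make the crediting rule concrete, here is a minimal sketch of how such a percentage could be computed. The `CommitStats` record and the `percent_code_written` function are hypothetical names invented for this illustration, and the 10-line commit size is an assumed number; this is not Codeium's actual implementation.

```python
from dataclasses import dataclass


@dataclass
class CommitStats:
    """Line counts for one commit (hypothetical record shape)."""
    new_lines: int            # all newly committed lines
    ai_unmodified_lines: int  # accepted AI lines that reached the commit unchanged


def percent_code_written(commits):
    """Return the share of new lines that came unmodified from the AI, as a percentage."""
    total_new = sum(c.new_lines for c in commits)
    if total_new == 0:
        return 0.0
    total_ai = sum(c.ai_unmodified_lines for c in commits)
    return 100.0 * total_ai / total_new


# The example from the text: a 5-line suggestion is accepted, 3 of its lines
# are deleted or modified before committing, so only 2 lines are credited.
accepted, changed = 5, 3
credited = accepted - changed

# Assumption for illustration: the commit contains 10 new lines in total.
commits = [CommitStats(new_lines=10, ai_unmodified_lines=credited)]
print(percent_code_written(commits))  # 20.0
```

Counting only unmodified lines is what keeps the number conservative: accepting a suggestion and then rewriting it earns no credit.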

Percentage of Code Written (PCW) is a great proxy since it is a metric that you cannot game, but we will be the first to say that this is not actual productivity. For this, we suggest that customers look at metrics such as pull request cycle times, which we do not have access to from Codeium. We have consistently seen PCW and reductions in pull request cycle times correlate with each other, with a ratio of roughly 2.5 to 1 today. That is, for every 25% of PCW, the developers at the company see a 10% reduction in pull request cycle times on average. A Fortune 50 enterprise customer of ours has seen a 43% PCW while simultaneously measuring a 17% decrease in pull request cycle times.
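The arithmetic behind the 2.5:1 ratio can be written out as a small back-of-the-envelope sketch. The function name is hypothetical, and the ratio is an observed correlation rather than a guarantee, so treat the output as a rough estimate only.

```python
# Ratio reported in the text; an observed correlation, not a law.
PCW_TO_CYCLE_TIME_RATIO = 2.5


def estimated_cycle_time_reduction(pcw_percent, ratio=PCW_TO_CYCLE_TIME_RATIO):
    """Rough estimate of the pull request cycle time reduction (in percent)."""
    return pcw_percent / ratio


# 25% PCW maps to roughly a 10% reduction.
print(estimated_cycle_time_reduction(25.0))  # 10.0

# The Fortune 50 example: 43% PCW gives an estimate close to the measured 17%.
print(round(estimated_cycle_time_reduction(43.0), 1))  # 17.2
```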

PCW is not a perfect metric. On one hand, it is conservative as it only includes code generated from Autocomplete and Command, and not from modalities such as Chat. On the other hand, as an in-editor product, Codeium can measure PCW within sessions, but will not catch situations such as edits to AI-generated and committed code by other authors or in future commits (no cross-commit tracking). Even with these caveats, we have found it to be very strong as a directionally accurate metric (increasing PCW means that you are getting more value), and one that is generally accurate with the exception of these couple of edge cases.

It should also be clear why PCW is not actual productivity! Unfortunately, in marketing materials, many code assistant vendors muddle metrics such as acceptance rate with value propositions such as developer productivity, but we understand the limitations of the metrics that we display. Often, the code being accepted leans towards boilerplate code, not the complex code logic that takes more time to think through. Also, PCW measures value in the editor, but developers don’t just live in the editor. Even if we speed up work in the editor by a significant percentage, that work does not represent all of the work required to produce software. Eventually, our goal is to help accelerate the entire software development life cycle, but for now this gap is why there is a 2.5:1 ratio between PCW and reduction of development cycle times.

Other Metrics, Slices, and Access

PCW is not the only metric that we show. On our administrator analytics dashboards, we show counting statistics like number of autocomplete acceptances, number of chats, etc., as well as breakdowns across time, IDE, and programming language. We break down Chat messages by task (documentation, explaining, refactoring, etc.), and we show how many days an individual has used Codeium and when they last used it. On the individual end, a developer can go to their profile page and see their personal counting statistics around autocomplete, chat, and language use.

In terms of slicing, the most powerful slicing capability that we offer is by team. Any organization can define teams using their existing IAM provider (so that the single source of truth for user groups remains in your IAM solution). We then read these groups and display all of these statistics filtered by team. Individuals can belong to multiple teams: “teams” is used here relatively loosely to represent any group of individuals, so you can have teams based on organization lines, geographies, tech stacks, project types, and more. This gives the organization flexibility to really analyze who is getting value, and how.
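As a rough illustration of slicing by loosely defined teams, the sketch below aggregates PCW per team when a person can appear in several teams. All names, numbers, and record shapes here are invented for the example; they do not reflect Codeium's data model or any IAM provider's API.

```python
# Hypothetical per-developer records: (developer, new_lines, ai_unmodified_lines)
usage = [
    ("alice", 200, 100),
    ("bob", 100, 20),
    ("carol", 300, 150),
]

# Hypothetical team membership, as might be read from an IAM provider.
# "bob" belongs to two teams: one organizational, one geographic.
teams = {
    "backend": ["alice", "bob"],
    "emea": ["bob", "carol"],
}


def pcw_by_team(usage, teams):
    """Aggregate line counts per team and return PCW (percent) for each team."""
    per_dev = {dev: (new, ai) for dev, new, ai in usage}
    result = {}
    for team, members in teams.items():
        known = [per_dev[m] for m in members if m in per_dev]
        new = sum(n for n, _ in known)
        ai = sum(a for _, a in known)
        result[team] = 100.0 * ai / new if new else 0.0
    return result


print(pcw_by_team(usage, teams))  # {'backend': 40.0, 'emea': 42.5}
```

Because membership overlaps, team totals are not meant to add up to the organization total; each team is an independent filter over the same underlying data.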

Finally, closing the thought in the section on PCW, we know that while we can provide unique metrics due to our integration into the editor, we cannot tell the entire story around developer productivity, and that there are likely many existing internal dashboards on this topic. So, we provide an API to all of the analytics that appear in our dashboards so that organizations can combine our data with existing data.

How Analytics Can Go Wrong

While the theoretical value of analytics is unambiguous, the art of analytics comes down to what metrics we are calculating and how we are surfacing them, both of which could lead to poor outcomes if done incorrectly.

The first, making sure we are calculating the right metrics, is one that we have talked about a number of times. For example, we don’t calculate acceptance rates because that is a metric that we clearly know does not correlate in any way to real productivity. The problem with the wrong metrics, however, extends past just not being useful or actionable. Metrics are naturally what everyone optimizes against. If we are computing and surfacing a metric that does not align with value, then we could make decisions that actually erode true value, all while convincing ourselves that things are getting better because the metric is improving. Similarly, users and customers will expect the metric numbers to increase with time.

Now that code assistants have been around for a while, we can actually see that tools that have been focusing on acceptance rate have not really changed in end experience, even though we are sure there was the best intent to increase value and even though there are significantly better models and complementary systems today! While we are not privy to decisions made by these teams, we would not be surprised if at least part of the decision making was motivated by trying to increase acceptance rates. If that is the key metric to optimize, one might make decisions such as offering fewer suggestions or shorter suggestions.
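A toy calculation shows how optimizing for acceptance rate can diverge from value. The numbers below are made up purely for illustration and do not describe any real tool; `summarize` is a hypothetical helper.

```python
def summarize(shown, accepted, avg_lines_per_accept):
    """Compute acceptance rate (percent) and total accepted lines."""
    return {
        "acceptance_rate": 100.0 * accepted / shown,
        "accepted_lines": accepted * avg_lines_per_accept,
    }


# Illustrative baseline: many suggestions, some of them long.
baseline = summarize(shown=100, accepted=30, avg_lines_per_accept=5)

# Illustrative "optimized" variant: fewer and shorter suggestions.
conservative = summarize(shown=40, accepted=20, avg_lines_per_accept=2)

print(baseline)      # {'acceptance_rate': 30.0, 'accepted_lines': 150}
print(conservative)  # {'acceptance_rate': 50.0, 'accepted_lines': 40}
```

In this made-up scenario the acceptance rate rises from 30% to 50% while the amount of accepted code falls from 150 lines to 40, which is the kind of erosion of value that a misaligned metric can hide.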

The second way that analytics could go wrong is in how we surface metrics. There is a fine line between actionable and over-reporting. We want to provide administrators the ability to take action to improve adoption of and value from Codeium, but there are many ways we could over-report. The obvious one is reporting usage statistics at the individual developer level. Not only does this seriously erode trust with the end users because this comes across as surveillance as opposed to assistance, but developers will also just start changing their behavior to game the metrics. At that point, the value of the tool has been rendered meaningless.

Future of Codeium Analytics

Almost all of our analytics have been motivated by real customer feedback on what would be actionable to them. So, as we get more customers, it is likely no surprise that our analytics will continue to evolve.

We look forward to giving finer-grained feature usage metrics, deeper analysis on how Codeium is helping specific task types (e.g. unit testing, debugging, documentation, code modernization, etc), auto-generated insights and actionable next steps, and more.

We believe we have today’s leading analytics platform of any AI code assistance tool, and will continue to drive more quantitative insights to our customers.